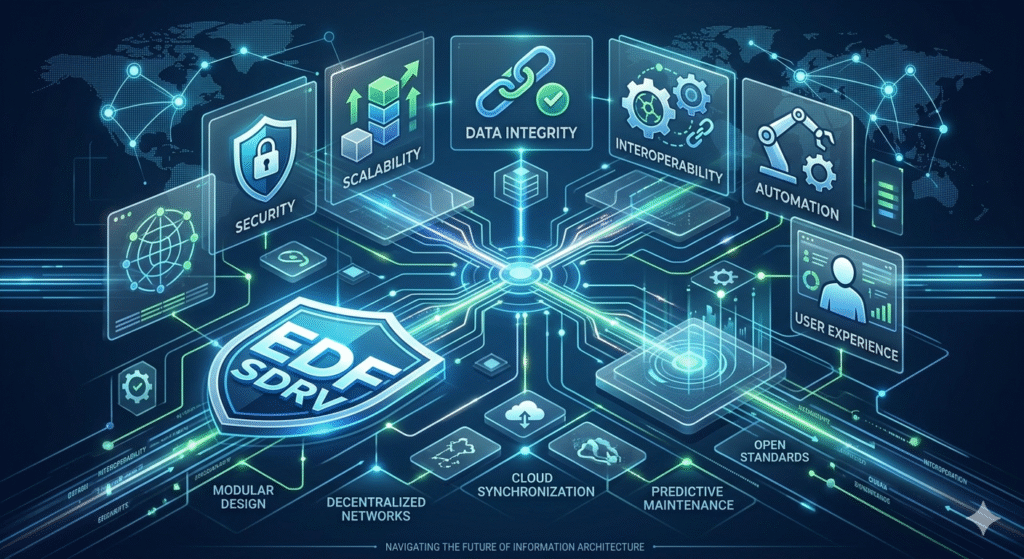

In the rapidly evolving landscape of digital infrastructure, the emergence of EDFVSDRV represents a pivotal shift in how we approach data integrity and system interoperability. As we move deeper into an era defined by decentralized networks and high-frequency data processing, understanding the foundational mechanics of this protocol is no longer optional for tech professionals; it is a necessity. The digital world is currently grappling with the “Information Overload” paradox, where the sheer volume of data often outpaces our ability to secure and categorize it effectively. This is where the significance of a robust framework like EDFVSDRV becomes apparent, offering a structured methodology to streamline complex workflows and enhance systemic resilience.

This article breaks the topic down into a clear, actionable roadmap for implementation and optimization. By bridging the gap between theoretical architecture and practical application, we will explore how this technology addresses modern bottlenecks in information retrieval and cloud synchronization. Whether you are a systems architect, a digital strategist, or a tech enthusiast, the sections below offer practical guidance for scaling your operations while maintaining performance. We will cover the technical nuances, compare the framework with legacy systems, and answer the most pressing questions so that you are equipped to use it in your own work.

The Core Architecture of EDFVSDRV Systems

The architecture of any high-performance digital system relies on its ability to handle concurrent requests without adding noticeable latency. At its heart, the protocol focuses on optimizing the handshake between local hardware and remote servers.

- Modular Design: Allows for hot-swapping of components without system downtime.

- Latency Reduction: Implements edge computing principles to process data closer to the source.

- Scalability: Designed to support exponential growth in node connections.
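To make the idea of handling concurrent requests concrete, here is a minimal Python sketch using a thread pool from the standard library. It is a generic illustration, not part of any EDFVSDRV specification: `handle_request` is a hypothetical stand-in for an I/O-bound call to a remote server, and the 50 ms sleep is an assumed network wait.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def handle_request(request_id: int) -> str:
    """Hypothetical stand-in for an I/O-bound call to a remote server."""
    time.sleep(0.05)  # simulated network wait (assumed value)
    return f"response-{request_id}"


def handle_concurrently(request_ids, max_workers: int = 8) -> list:
    """Run the handlers in a thread pool; results keep the input order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(handle_request, request_ids))


if __name__ == "__main__":
    start = time.perf_counter()
    results = handle_concurrently(range(8))
    elapsed = time.perf_counter() - start
    print(results[:2], f"{elapsed:.2f}s")
```

Because the simulated waits overlap, eight requests should finish in roughly the time of one rather than eight in sequence. For CPU-bound work, a process pool would likely be the better choice.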

Enhancing Data Sovereignty and Security

Security remains a primary concern in information management. Implementing these standards is intended to keep data encrypted throughout its lifecycle, protecting sensitive assets from unauthorized interception.

- End-to-End Encryption: Utilizes advanced cryptographic standards to secure packets.

- Access Control: Granular permission levels ensure only verified users interact with the core.

- Audit Trails: Automated logging provides a transparent history of all system changes.
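One common way to make an audit trail tamper-evident is to chain each log record to the previous one with a cryptographic hash. The sketch below shows that general technique with Python's `hashlib`. The record layout, the function names, and the `GENESIS` value are assumptions made for illustration, not a format defined by EDFVSDRV.

```python
import hashlib
import json

GENESIS = "0" * 64  # assumed starting value for the hash chain


def _digest(prev_hash: str, entry: dict) -> str:
    """Hash the previous record's hash together with the current entry."""
    payload = json.dumps(entry, sort_keys=True).encode("utf-8")
    return hashlib.sha256(prev_hash.encode("ascii") + payload).hexdigest()


def append_entry(log: list, actor: str, action: str) -> None:
    """Append a record whose hash depends on everything logged before it."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    entry = {"actor": actor, "action": action}
    log.append({"entry": entry, "hash": _digest(prev_hash, entry)})


def verify_log(log: list) -> bool:
    """Recompute the chain; any edited or reordered record breaks it."""
    prev_hash = GENESIS
    for record in log:
        if record["hash"] != _digest(prev_hash, record["entry"]):
            return False
        prev_hash = record["hash"]
    return True
```

Editing any earlier entry changes its hash and invalidates every record after it, so `verify_log` returns `False`. A production system would also need to protect the most recent hash itself, for example by storing it separately.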

Integration Strategies for Modern Enterprises

For a business to thrive, its tech stack must be cohesive. Integration involves more than just API connections; it requires a deep understanding of how information flows across different departments.

- API-First Approach: Simplifies the connection between legacy systems and new protocols.

- Middleware Optimization: Reduces the friction between front-end interfaces and back-end databases.

- Workflow Automation: Eliminates repetitive tasks through intelligent scripting.

Comparative Analysis: Legacy vs. EDFVSDRV

Understanding the leap in efficiency requires looking at where we came from. Traditional models often suffered from “bottlenecking” during peak traffic periods, a problem this new framework solves through dynamic resource allocation.

| Feature | Legacy Systems | EDFVSDRV Framework |
| --- | --- | --- |
| Data Processing | Sequential | Parallel & Distributed |
| Recovery Time | Manual / Hours | Automated / Seconds |
| Cost Efficiency | High Overhead | Optimized Consumption |

The Role of Automation in Maintenance

Automation is the silent engine of modern IT. By analyzing operational data, systems can now flag many failures before they occur, which helps critical services meet uptime targets such as 99.9%.

- Predictive Analytics: Analyzes historical data to forecast potential hardware stress.

- Self-Healing Protocols: Automatically restarts stalled services without human intervention.

- Patch Management: Deploys security updates across the network simultaneously.
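The self-healing idea above can be sketched as a heartbeat watchdog: each service reports in periodically, and any service that stays silent for too long is restarted. This is a minimal illustration under stated assumptions. The `Watchdog` class, the timeout, and the `restart` callback are hypothetical names, and a real deployment would more likely rely on a process supervisor or orchestrator.

```python
import time
from typing import Callable, Dict, List


class Watchdog:
    """Restart services that have not sent a heartbeat within timeout_s."""

    def __init__(self, timeout_s: float, restart: Callable[[str], None],
                 clock: Callable[[], float] = time.monotonic):
        self.timeout_s = timeout_s
        self.restart = restart      # hypothetical callback that restarts a service
        self.clock = clock          # injectable so the logic can be tested
        self.last_seen: Dict[str, float] = {}

    def heartbeat(self, service: str) -> None:
        """Record that the service is alive right now."""
        self.last_seen[service] = self.clock()

    def check(self) -> List[str]:
        """Restart every stalled service and return their names."""
        now = self.clock()
        stalled = [name for name, seen in self.last_seen.items()
                   if now - seen > self.timeout_s]
        for name in stalled:
            self.restart(name)
            self.last_seen[name] = now  # give the restarted service a fresh window
        return stalled
```

In practice `check` would run on a timer, and `restart` would call whatever mechanism the platform provides for restarting a service.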

Optimizing User Experience through Fast Retrieval

In information retrieval, speed is a measure of quality. Users expect near-instantaneous access to data, and the underlying protocol plays a large role in meeting that expectation.

- Smart Caching: Stores frequently accessed information in high-speed memory layers.

- Database Indexing: Refines search queries to reduce server load.

- Asynchronous Loading: Ensures the user interface remains responsive during heavy data fetches.
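As an illustration of the caching item above, the following sketch implements a small least-recently-used (LRU) cache, one common eviction policy for keeping frequently accessed data in fast memory. The article does not say which policy EDFVSDRV uses, so LRU is an assumption here, as is the `LRUCache` name.

```python
from collections import OrderedDict


class LRUCache:
    """Keep at most `capacity` items, evicting the least recently used."""

    def __init__(self, capacity: int):
        if capacity < 1:
            raise ValueError("capacity must be at least 1")
        self.capacity = capacity
        self._items = OrderedDict()

    def get(self, key, default=None):
        """Return the cached value and mark the key as recently used."""
        if key not in self._items:
            return default
        self._items.move_to_end(key)
        return self._items[key]

    def put(self, key, value) -> None:
        """Insert or update a value, evicting the oldest entry if full."""
        self._items[key] = value
        self._items.move_to_end(key)
        if len(self._items) > self.capacity:
            self._items.popitem(last=False)  # drop the least recently used
```

For caching the results of a pure function, Python's built-in `functools.lru_cache` decorator gives the same behavior with less code.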

Impact on Global Cloud Connectivity

The cloud is no longer a single entity but a web of interconnected services. This protocol acts as the glue that maintains stability across multi-cloud environments.

- Inter-Cloud Syncing: Ensures data consistency across AWS, Azure, and Google Cloud.

- Bandwidth Throttling: Manages data flow to prevent network congestion.

- Redundancy Layers: Mirrors data across multiple geographic regions for safety.
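Bandwidth throttling is often implemented with a token bucket, which allows short bursts while capping the long-run rate. The sketch below shows that general algorithm. The class name, the parameters, and the choice of a token bucket are assumptions for illustration, since the article does not specify how the protocol throttles traffic.

```python
import time


class TokenBucket:
    """Allow bursts up to `capacity` while limiting the sustained rate."""

    def __init__(self, rate_per_s: float, capacity: float, clock=time.monotonic):
        self.rate = rate_per_s      # tokens added per second
        self.capacity = capacity    # maximum burst size
        self.clock = clock          # injectable so the logic can be tested
        self.tokens = capacity      # start with a full bucket
        self.updated = clock()

    def allow(self, cost: float = 1.0) -> bool:
        """Refill based on elapsed time, then spend `cost` tokens if available."""
        now = self.clock()
        elapsed = now - self.updated
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.updated = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False
```

A sender would call `allow()` before each transmission and delay or drop the packet when it returns `False`.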

Sustainable Tech: Energy Efficiency Metrics

As we become more conscious of our environmental footprint, tech efficiency is being measured in watts as well as bits. Modern protocols are designed to do more with less power.

- Low-Power States: Puts idle nodes into a deep sleep to conserve electricity.

- Optimized Cooling: Works with data center AI to manage thermal loads effectively.

- Resource Virtualization: Maximizes hardware utility to reduce the need for physical servers.

Navigating Implementation Challenges

No transition is without its hurdles. Common obstacles include staff training and the initial migration of large datasets from older, less flexible formats.

- Phased Rollouts: Implementing gradually to minimize operational risk.

- Training Modules: Investing in human capital to manage the new system.

- Data Cleaning: Ensuring information is accurate before it enters the new environment.

Case Study: Financial Sector Transformation

A leading fintech firm recently adopted these principles to handle their transaction processing. The results showed a significant decrease in “timeout” errors and improved customer trust.

- Challenge: 15% transaction failure rate during peak hours.

- Solution: Migrating to an EDFVSDRV-compliant distributed ledger.

- Result: Failure rate dropped to 0.02% within three months.
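To put the two reported rates in perspective, the short calculation below works out the relative improvement. It uses only the figures quoted above (15% and 0.02%) and shows that the change corresponds to roughly a 750-fold reduction in failures. The per-million comparison is just the same figures rescaled.

```python
before = 0.15    # 15% failure rate during peak hours (from the case study)
after = 0.0002   # 0.02% failure rate after migration (from the case study)

relative_reduction = (before - after) / before   # share of failures eliminated
improvement_factor = before / after              # how many times fewer failures

# The same rates expressed per one million transactions
failures_before = round(before * 1_000_000)
failures_after = round(after * 1_000_000)

print(f"relative reduction: {relative_reduction:.2%}")
print(f"improvement factor: {improvement_factor:.0f}x")
print(f"per million transactions: {failures_before} -> {failures_after}")
```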

Future Trends: AI and Protocol Evolution

The intersection of Artificial Intelligence and information protocols is the next frontier. We are seeing a shift toward “thinking” networks that adapt their own parameters based on real-world usage patterns.

- Neural Routing: AI determines the fastest path for data packets.

- Anomaly Detection: Real-time identification of suspicious patterns.

- Dynamic Scaling: The system expands or shrinks based on real-time demand.
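Anomaly detection can start with something far simpler than a neural network. The sketch below flags samples that sit more than a chosen number of standard deviations from the mean (a z-score test). It illustrates the general idea only. The function name and the default threshold of 3.0 are assumptions, and production systems typically use more robust methods.

```python
from statistics import mean, stdev


def find_anomalies(samples, threshold: float = 3.0) -> list:
    """Return the indexes of samples whose z-score exceeds the threshold."""
    if len(samples) < 2:
        return []  # not enough data to estimate spread
    mu = mean(samples)
    sigma = stdev(samples)
    if sigma == 0:
        return []  # all samples identical, nothing stands out
    return [i for i, x in enumerate(samples)
            if abs(x - mu) / sigma > threshold]


if __name__ == "__main__":
    latencies_ms = [10] * 19 + [100]  # made-up data with one obvious spike
    print(find_anomalies(latencies_ms))
```

An outlier inflates the standard deviation it is measured against, so with very small samples a spike can go undetected. Median-based measures are a common remedy.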

The Importance of Open Standards

Proprietary systems often lead to vendor lock-in. Embracing open standards ensures that your information architecture remains flexible and future-proof.

- Community Support: Access to a global pool of developers and experts.

- Vendor Agnosticism: The freedom to switch service providers without rebuilding.

- Transparent Updates: Publicly vetted security patches and feature updates.

Enhancing Remote Collaboration Tools

As remote work becomes the standard, the backend tools we use must be faster and more reliable. This framework supports the heavy lifting required for real-time video and data sharing.

- Low-Latency Sync: Multiple users can edit documents simultaneously, with changes merged quickly enough to avoid conflicts.

- Secure Tunnels: Encrypted paths for remote employees to access local assets.

- Packet Prioritization: Ensures voice and video traffic get the highest priority.
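Packet prioritization can be sketched with a priority queue: every packet carries a traffic class, and the scheduler always sends the highest-priority packet first. The traffic classes, their ranking, and the `PriorityScheduler` name below are hypothetical, and real networks usually enforce this in routers and switches rather than in application code.

```python
import heapq
import itertools

# Hypothetical traffic classes; a lower number means higher priority
PRIORITY = {"voice": 0, "video": 1, "data": 2}


class PriorityScheduler:
    """Dequeue packets by traffic class, first-in first-out within a class."""

    def __init__(self):
        self._heap = []
        self._counter = itertools.count()  # tie-breaker that preserves arrival order

    def enqueue(self, traffic_class: str, payload) -> None:
        """Queue a packet under its traffic class."""
        rank = PRIORITY[traffic_class]
        heapq.heappush(self._heap, (rank, next(self._counter), payload))

    def dequeue(self):
        """Return the payload of the highest-priority waiting packet."""
        return heapq.heappop(self._heap)[2]

    def __len__(self) -> int:
        return len(self._heap)
```

The counter keeps packets of the same class in arrival order and prevents the heap from ever comparing payloads directly.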

Mastering Metadata for Better Organization

Information is useless if it cannot be found. Metadata management within this protocol allows for sophisticated tagging and categorization that makes retrieval effortless.

- Auto-Tagging: AI-driven identification of file contents.

- Search Filters: Granular options for finding specific data points.

- Version Control: Tracking changes across years of data accumulation.

Technical Specification Requirements

Before deploying, it is vital to ensure your hardware meets the necessary benchmarks. Under-specifying can lead to performance issues that negate the benefits of the protocol.

- Processor Minimums: Multi-core setups are recommended for parallel processing.

- Memory Bandwidth: High-speed RAM is essential for the caching layers.

- Network Interface: Gigabit connections or higher are required for cloud sync.

The Ethics of Information Management

With great power comes great responsibility. Handling data at this scale requires a commitment to ethical standards and user privacy.

- Anonymization: Removing personal identifiers from large datasets.

- Consent Management: Ensuring users have control over their own information.

- Bias Mitigation: Reviewing algorithms to ensure fair treatment of all data types.
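As one illustration of the anonymization item above, the sketch below replaces personal identifiers with keyed hashes using Python's `hmac` module. Strictly speaking this is pseudonymization rather than full anonymization, because anyone holding the key can link records back to known identifiers. The field names and function names are assumptions for the example.

```python
import hashlib
import hmac


def pseudonymize(identifier: str, secret_key: bytes) -> str:
    """Replace an identifier with a keyed SHA-256 digest (HMAC)."""
    return hmac.new(secret_key, identifier.encode("utf-8"),
                    hashlib.sha256).hexdigest()


def strip_identifiers(record: dict, id_fields, secret_key: bytes) -> dict:
    """Return a copy of the record with the listed fields pseudonymized."""
    return {
        key: pseudonymize(str(value), secret_key) if key in id_fields else value
        for key, value in record.items()
    }


if __name__ == "__main__":
    key = b"example-key-do-not-reuse"  # placeholder; load real keys from a secret store
    row = {"email": "user@example.com", "plan": "pro"}
    print(strip_identifiers(row, {"email"}, key))
```

Using a keyed hash rather than a plain one makes it much harder to recover identifiers by hashing guesses, as long as the key stays secret.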

Cost-Benefit Analysis for Startups

For smaller players, the initial investment might seem daunting. However, the long-term savings in maintenance and scaling often justify the upfront expenditure.

- Reduced Overhead: Lower long-term costs for server maintenance.

- Faster Growth: The ability to scale without re-engineering the entire system.

- Market Advantage: Offering a faster, more secure service than competitors.

Troubleshooting Common Protocol Errors

Even the best systems encounter issues. Knowing how to quickly identify and resolve common “handshake” errors is a vital skill for any administrator.

- Error 402 (Sync Failure): Usually caused by mismatched timestamp headers.

- Packet Loss: Often a result of hardware interference or cabling issues.

- Authentication Loops: Usually resolved by clearing the credential cache.
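When a sync failure is suspected to come from mismatched timestamps, a quick first check is to compare the two clocks against a tolerance. The sketch below does this for ISO 8601 timestamps. The 30-second tolerance, the function name, and the header format are assumptions, since the article does not specify them.

```python
from datetime import datetime, timedelta

MAX_SKEW = timedelta(seconds=30)  # hypothetical tolerance, not a documented value


def timestamps_match(local_iso: str, remote_iso: str,
                     max_skew: timedelta = MAX_SKEW) -> bool:
    """Return True when two ISO 8601 timestamps differ by at most max_skew.

    Both timestamps must carry a UTC offset (or both must omit it);
    mixing the two raises TypeError when they are subtracted.
    """
    local = datetime.fromisoformat(local_iso)
    remote = datetime.fromisoformat(remote_iso)
    return abs(local - remote) <= max_skew


if __name__ == "__main__":
    print(timestamps_match("2024-01-01T00:00:00+00:00",
                           "2024-01-01T00:00:10+00:00"))
```

If the check fails, synchronizing both machines against the same time source (for example via NTP) is usually the next step.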

Frequently Asked Questions

What exactly is the primary function of EDFVSDRV?

The primary function is to provide a standardized, highly secure framework for the transmission and storage of complex data sets across decentralized networks, ensuring both speed and integrity.

Is this protocol compatible with older legacy hardware?

While optimized for modern architecture, it is backward-compatible through the use of specialized middleware and API bridges, though performance may be limited by the older hardware’s physical constraints.

How does it improve SEO for digital platforms?

By reducing server response times and improving data structure, it can help a site perform better on Google’s Core Web Vitals, which Google uses as a ranking signal.

What are the security risks associated with implementation?

The risks are minimal if standard encryption protocols are followed. However, improper configuration of access permissions remains the most common human-led vulnerability.

Does it require specialized training to manage?

A foundational knowledge of systems administration is required, but many modern tools offer intuitive dashboards that simplify the management of EDFVSDRV-compliant networks.

Can it be used for small-scale personal projects?

Absolutely. While designed for enterprise-level scaling, its efficiency benefits can significantly improve the performance of personal web servers and home-lab environments.

Where can I find official documentation?

Official documentation is typically maintained by the governing tech consortiums and is available through major developer repositories and open-source platforms.

Conclusion: Building a Resilient Digital Legacy

The integration of EDFVSDRV into our digital ecosystems is a step toward a more efficient, secure, and user-centric future. By focusing on modularity, speed, and robust security, this framework provides the tools needed to navigate the complexities of modern information management. We have explored the technical requirements, the organizational benefits, and the ethical considerations that come with managing data at scale. As technology continues to advance, staying informed about these protocols helps keep your systems competitive and reliable.

The value of this approach lies in its ability to solve real-world problems: reducing downtime, protecting privacy, and enabling seamless global collaboration. Now is the time to audit your current infrastructure and identify areas where these principles can be applied. Whether you are optimizing a single website or managing a vast corporate network, the path toward excellence starts with a commitment to high-quality standards and continuous learning. Implement the strategies discussed and revisit them as your systems grow. For those looking to go deeper, further reading on network automation and advanced cryptography is a natural next step.